In the US, tower companies have enjoyed a 20-year growth tailwind that shows no signs of fatigue. The population of wireless subscribers has grown at a high-single-digit rate, from fewer than 130mn in 2001 to 440mn in 2018. In turn, network standards have evolved – from 2G (voice, SMS; 2000-2006) to 3G (mobile […]

[AMT – American Tower; CCI – Crown Castle] Legacy advantages and incremental returns

Posted by scuttleblurb in [AMT] American Tower, [CCI] Crown Castle

[MELI – MercadoLibre] Digging the Moat (Or Is It A Grave?): Part 3

Posted by scuttleblurb in [MELI] MercadoLibre

Related posts: [MELI – MercadoLibre] Digging the Moat (Or Is It A Grave?): Part 1; [MELI – MercadoLibre] Digging the Moat (Or Is It A Grave?): Part 2

In 2016, six years and several pro-competition pieces of regulation after the Central Bank of Brazil and antitrust authorities first pried the merchant acquiring market open to […]

[SHOP – Shopify; WIX – Wix] Platforms, scale economies, and technology

Posted by scuttleblurb in [SHOP] Shopify, [WIX] Wix.com

Related posts: [Wix – Wix.com] Scaling profitably (November 2017); [GDDY – GoDaddy; VRSN – Verisign; EIGI – Endurance International] Value Migration in Web Services (May 2018)

When I started looking into Wix more than two years ago, its capabilities seemed far beyond those of Weebly, Squarespace, and several other competing site builders I was […]

scuttleblurb business update (2019)

Posted by scuttleblurb in Business updates

Like Tom Hanks sustaining a dialogue with a Wilson volleyball, I sometimes let myself believe that my words mean more to my readers than they surely do. For most of you, scuttleblurb is just one of many resources you consume to get smarter about companies. But for me, a guy who reads and writes most of the day on the quiet island of his attic, you play an active, multifaceted role. You pay to read my posts, which makes you a customer; you challenge my assumptions and send me your research, which makes you a collaborator; you tell your friends about this blog and tweet my posts, which makes you my sales force. And so, given what I see as your vital role in the growth and viability of this venture, I thought it only fair to let you in on what’s been taking place behind the scenes.

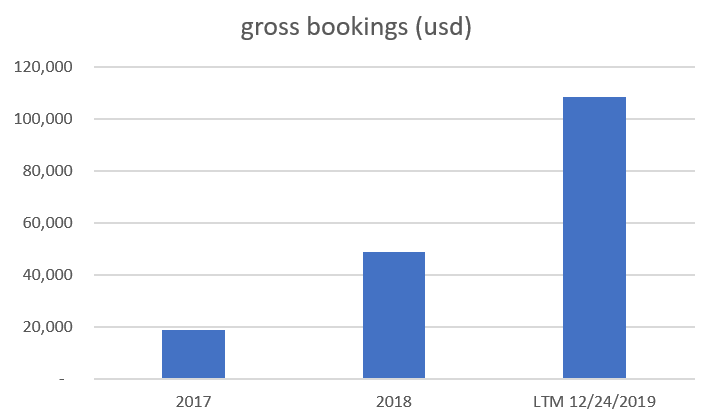

Here are my gross bookings over the last 3 years:

I’ve been adding around 25 to 30 new subscribers/month recently. This year, my annual churn rate was just under 11% and my largest expense by far was credit card processing fees, which amounted to 3.5%-4.0% of my gross billings. While scuttleblurb is not exactly crushing it, it’s building towards an income that seems good enough to support a modest lifestyle for a family of 4 in Portland, OR.

Being fine with “good enough” was something that hit me in my early 30s, after I had spent a decade building a career that was most definitely not supposed to culminate in me blogging in sweat shorts from an attic. Maybe I was preparing myself for fatherhood or maybe I was still rebelling against the bovine stupor of b-school recruiting, but at some point I stopped speculating abstractly about the limits of what I could achieve in my career and began thinking concretely about what I wanted my day-to-day to look like. It occurred to me that up until then, I had been conflating what I really wanted (to do intellectually stimulating work on my own terms; spend time with friends and family; and live proximate to nature) with some vague and cartoonish notion of who I thought I was supposed to be (a money guy with title and status).

scuttleblurb global HQ (Portland, OR; December 2019)

As this realization took hold, I considered where my abilities and obsessions intersected with what other people might pay for, which narrowed the ways I could spend my time to 1/ investing and 2/ writing about companies.

The second option, writing about companies, was intended to partially stem the hemorrhaging of personal savings as I pursued the first. But the thing is, before investing OPM (other people’s money), you must first convince those people to give it to you. And if you don’t come from money, that usually takes a lot of time plus a Goldilocks blend of commercial acumen, salesmanship, and track record paired with some lucky breaks. I was unwilling to take fast money, but with a family to support, I couldn’t just wait around for patient capital that might never come.

Hence, scuttleblurb.

Writing is central to my investment process. I find it to be the most effective test of whether I really know what I think I know. It also jogs my mind and opens new avenues of exploration in a way that listing bullet points or checking off diligence items does not. So I figured if I was going to write for myself, I may as well see if others would pay to come along for the ride…which, in retrospect, was a rather arrogant thought given how many smart analysts publish their work for free. Andrew Walker’s yetanothervalueblog [4] comes to mind (his recent 3-parter on media is excellent), as do thoughtful writeups from John Hempton [5], Kyler Hasson [6], Mine Safety Disclosures [7], Ensemble Capital [8], Chris Mayer [9], and Bill Brewster [10]. You can find plenty of decent reports on Value Investors Club [11] too. Not to mention, several times a year, Greenhaven Road [12], Coho Capital, Upslope Capital [13], RGA Investment Advisors [14], and many other talented managers publish investor letters with insightful stock-specific opinions.

And how much should I charge? As I thought about comps, I found no reliable correlation between price and quality. Ben Thompson charges $100/year for a consistently high-quality newsletter, delivered 4 times/week. But then there are at least a handful of independent research shops that charge thousands/year for low insight copy-and-paste jobs festooned with DCF snapshots, sensitivity tables and other adornments to show you they mean business. I suppose they justify their lofty prices by offering actionable ideas that will yield many times what they charge. I have no problem with stock pitches (I do my fair share of them), but good ideas tend not to abide by arbitrary publishing schedules. And also, it’s not hard to see how getting paid to pitch might incent perverse outcomes…how one could be tempted, even subconsciously, to tweak a few paragraphs or ignore alternative perspectives so as to accentuate a clean, consistent narrative that stands a better shot, compared to a nuanced take, of attracting subscribers seeking unambiguously and immediately actionable stock tips.

The retail version of this – rags purporting to reveal “The Secret Stock Picks of Billionaire Investors” or inviting you to discover the “Top 10 Cannabis Stocks to Buy Right Now” – is far more toxic, as it lassos retail investors who may not possess the financial savvy to sniff out meretricious claims. These publications are ubiquitous and so scammy that I think many investors have understandably tuned out all investing-related newsletters, especially low-priced ones like mine.

Scuttleblurb is currently priced at $210/year, where I expect it will remain for the foreseeable future. Why $210? Well, I figured if I charged thousands/year, subscribers might start to think of me as their on-call personal analyst, which would spoil my quality of life and crowd out research time (of course, that’s assuming enough people would pay that much to read my blog, which I doubt). At the other extreme, given the niche appeal of my writeups, I didn’t think I could build a readership large enough to earn a living at a price below $100. $210 felt just low enough that if you enjoyed the blog, you wouldn’t feel weird casually telling your friends to subscribe and you wouldn’t think twice about renewing your own subscription. Now, it would be one hell of a coincidence if $210, a number I more or less picked at random, were the revenue-maximizing price. I suppose I could tweak things in a way that captures more value, but frankly I just don’t care enough to bother. I’m fine with how things are going at $210.

I wasn’t so whimsical during the early dark days of scuttleblurb. The business was in a rather precarious state a few years ago. For months, I published into a vacuum, my posts attracting no subscribers, eliciting no feedback, nothing. I literally could not give away a subscription. Desperate to get on somebody’s radar, I emailed free usernames/passwords to fund managers, authors, and “pundits”. Nobody logged in. The first flickers of life were sparked by several ad campaigns that I ran on Market Folly in late 2016/early 2017. But it wasn’t until @LibertyRPF [15] and @BluegrassCap [16] tweeted a few of my posts that scuttleblurb found its audience and the blog began to take shape as a viable business.

Who is that audience and where do they come from?

Based on the subset of scuttleblurb subscribers who filled out my survey, most of you are professional investors. Nearly 75% of you discovered the blog through word-of-mouth on Twitter and COFB. Another 11% were referred by friends and colleagues.

Scuttleblurb lives on subscriber recommendations and securing those recommendations requires a dedication to producing relevant, consistently high-quality work. There are no hacks.

Quality is tough to describe, but I think people who obsess over the same niche interest will generally agree on the extent to which it is present in a piece of writing or analysis pertaining to that interest. Relevance is harder to tackle because each reader is drawn to a unique combination of factors. Some are looking for compounders regardless of industry; some want compounders, but only capital light compounders or small cap compounders; others love payments (I have a certain dentist in mind! 😉) or just want to read about SaaS.

It’s often said that the internet has allowed countless creators of niche content to find a paying audience. But being niche in one area often means being broad somewhere else. Ben Thompson, for instance, is niche in that he focuses on technology, but broad in that he analyzes technology’s implications for politics, society, culture, and business. I am niche in that I limit my analysis to business models and competitive strategy, but broad in that I write about many different sectors, from software to aluminum cans.

One of the challenges with not being niche along both dimensions is that I will never consistently satisfy those who subscribe solely to read about a particular company or sector, which I was sure would foment lots of churn. But so far that hasn’t been the case. Hot sectors like SaaS and media register elevated interest, sure, but in general I have been surprised by how consistent readership has been from one writeup to the next (my post on Protector Forsikring, an obscure small cap P&C insurer in Norway, was far more popular than I would have imagined).

A more pressing concern with broad sector coverage is that there is no way I can be an expert on all or even most of the things I write about (some of you send emails asking me what’s up with such-and-such company that I wrote up in the past. If I don’t respond, it’s usually because I have no clue). When you subscribe to the work of Matthew Ball, Ben Thompson, and Bill Bishop, you are paying for expert opinions on media, big tech, and China. Unfortunately, I cannot claim a similar level of competence about anything really.

Another issue is that when folks assign your blog to the “investing” category, they often want a buy or sell recommendation plus the full pageantry of comp tables, DCF snapshots, etc. that they have been conditioned to expect (one prospective subscriber asked for a complete track record of how my stock picks from the blog performed). But as you know, I try not to play that game.

So then what are scuttleblurb subscribers paying for? Well, I find that most business analyses fall short in that they either list stats and attributes (“this is a good company because it is capital light, has low customer concentration, boasts an LTV/CAC of 6, etc.”) or devote too much real estate to showcasing Excel outputs (the soothing and often time-consuming exercise of wiring an Excel model can sometimes lull you into thinking you understand a business better than you do). Very few analyze companies within the context of a broader ecosystem or offer more than a cursory discussion of competitive strategies and business models. Many are jacked up with sensational, lopsided claims.

It seems to me that investment research has assumed a more performative flair over time, as if the purpose of a write-up were to argue with great conviction and adamancy for either the long or short side, as if conceding any point were tantamount to betraying your tribe, as if tribal devotion to an investment hypothesis weren’t utterly ridiculous. For some funding-sensitive stocks like Burford Capital and maybe Tesla, each side may be trying to seize control of the narrative to reflexively provoke certain outcomes, but I think in most cases people just feel pot-committed to their priors and get emotionally hijacked by opposing views. You might try nudging yourself closer to reality by reading the opposing arguments side-by-side with an open mind, but doesn’t it seem odd to think that two distorted but contrasting views should somehow blend into an informed, nuanced perspective? The problem is that both sides are so charged in their clamor for territory that neither leaves any neutral ground on which to apply independent judgment, and so rather than evaluating the merits of the company, you instead find yourself assessing the rhetorical strengths of two disagreeing raconteurs.

I hope you find scuttleblurb to be a welcome reprieve from the melee, a place where you can find sober, clearly articulated posts about what a company does, how it competes, and how it creates and claims value in its ecosystem. I don’t always get it right but, without the pressure to pitch or to maintain the appearance of expertise, I can fess up to mistakes and explore ideas with sincerity. I try to stay humble about my state of knowledge and offer arguments as an informed amateur groping for good explanations. I believe my readers have come to expect this approach from me as much as I demand it of myself.

Someone once said you get the customers you deserve. If that’s true, then I consider the quality of scuttleblurb’s subscriber base to be the finest compliment I could hope to receive. Thanks for all your friendship and support this year. I had the pleasure of meeting some of you in person in 2019 and I hope to meet many more of you in 2020.

Until soon,

Dave

[FISV, GPN, FIS, SQ, Stripe, Adyen] On payment processors, distribution, and technology: Part 4 of 4

Posted by scuttleblurb in [FIS] Fidelity National Information, [FI] Fiserv, [GPN] Global Payments, [MA] Mastercard, [V] Visa

If you follow the payments space even casually, you are no doubt aware of the sequence of mega mergers that have taken place between merchant acquirers and core processors this past year.

Source: scuttleblurb and public filings

The bolded companies in the table above are the surviving merged entities, their market caps and enterprise […]

[FISV, GPN, FIS, SQ, Stripe, Adyen] On payment processors, distribution, and technology: Part 3 of 4

Posted by scuttleblurb in [FIS] Fidelity National Information, [FI] Fiserv

Having covered merchant acquirers and card networks, I’m down to the final part of the payment edifice – issuer processors. Issuer processors are to card-issuing banks what merchant acquirers are to merchants. They authorize and process transactions on behalf of card-issuing banks under long-term agreements and recognize revenue based on a combination of […]

[FISV, GPN, FIS, SQ, Stripe, Adyen] On payment processors, distribution, and technology: Part 2 of 4

Posted by scuttleblurb in [ADYEN] Adyen N.V., [FI] Fiserv, [GPN] Global Payments, [SQ] Square

In the payments space, the difference between a distributor and a competitor can be so blurry as to lose functional meaning. Mercury Payment was a significant ISO for legacy acquirers before Vantiv acquired it from Silver Lake. Stripe offers many of the same services as the legacy acquirers to whom it outsources transaction processing. Ditto […]

[FISV, GPN, FIS, SQ, Stripe, Adyen] On payment processors, distribution, and technology: Part 1 of 4

Posted by scuttleblurb in [FI] Fiserv, [GPN] Global Payments

This is Part 1 of what I expect will be a 3-part series on merchant acquirers and issuer-side payment processors [edit: 4-part series!]. It describes the basic mechanics of the merchant acquisition model and delves into how incumbent players like First Data, Global Payments, and Worldpay got to where they were prior to the flurry of […]